The state of AI for chip design (NeurIPS 2024)

“Can a custom chip be designed by a few people, in a few weeks?”

That’s the central question Jeff Dean asked at NeurIPS 2024.[1] The answer right now (for all commercial purposes) is an emphatic No.

For reference, Nvidia’s Blackwell chip reportedly cost a staggering $10B to develop. Today’s hottest AI chip startups have also collectively raised billions to mount a challenge.

Designing (& verifying) a new custom chip currently takes hundreds of engineers and years of agonising R&D.

But could that be changing with novel applications of ML? Will chip design see a big productivity boost the way SWE has from codegen so far?

More attention is on chip design at this year’s NeurIPS than ever before. Here are 5 interesting papers that give us a glimpse into how we might get to end-to-end AI-automated chip development:

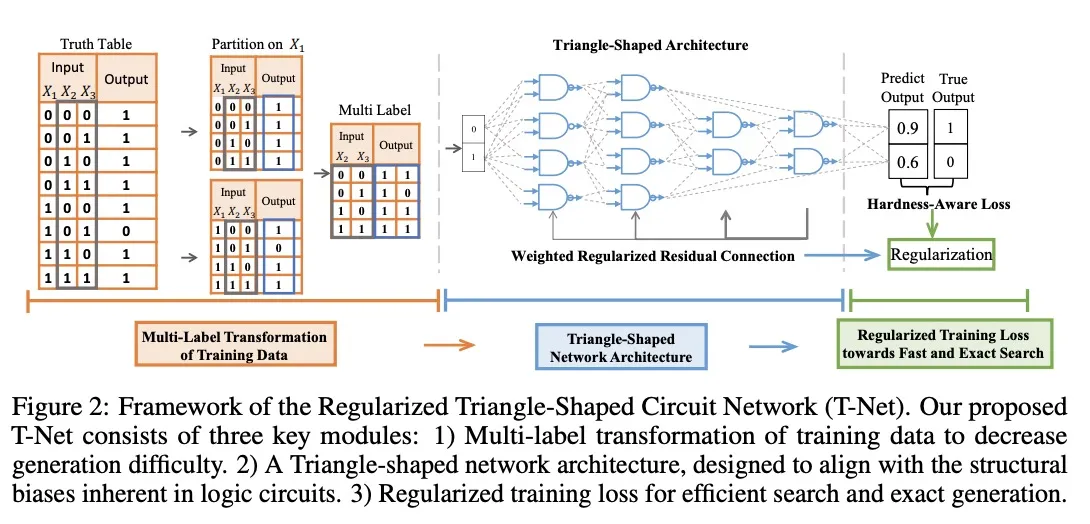

1. Towards Next-Generation Logic Synthesis: A Scalable Neural Circuit Generation Framework

Logic Synthesis (LS) is a process in circuit design where a high-level description of the hardware is translated down to a lower-level representation of logic gates while optimising for performance criteria like speed, area, and power efficiency.

Existing ML-based approaches to LS have 3 main pitfalls: their outputs can be inaccurate, they scale poorly for larger circuits, and they’re very sensitive to initial parameter settings.

The authors propose a framework called T-Net, which they report is more accurate and scalable and outperforms industry-standard LS methods.
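To make “accurate” concrete: a generated circuit only counts if it matches the target truth table on every input combination. Here is a minimal sketch of that check in Python. The netlist format, the gate set, and the majority-function example are my own illustrative assumptions, not T-Net’s actual representation.

```python
from itertools import product

# Hypothetical netlist format: (output_wire, gate, input_wires), in evaluation order.
MAJORITY_NETLIST = [
    ("n1", "AND", ("a", "b")),
    ("n2", "XOR", ("a", "b")),
    ("n3", "AND", ("c", "n2")),
    ("out", "OR", ("n1", "n3")),
]

# Same circuit with the final OR swapped for an AND, to show a failing case.
BROKEN_NETLIST = MAJORITY_NETLIST[:-1] + [("out", "AND", ("n1", "n3"))]


def simulate(netlist, inputs):
    """Evaluate every gate once and return the value on each wire."""
    values = dict(inputs)
    for out, gate, ins in netlist:
        args = [values[name] for name in ins]
        if gate == "AND":
            values[out] = int(all(args))
        elif gate == "OR":
            values[out] = int(any(args))
        elif gate == "XOR":
            values[out] = args[0] ^ args[1]
        elif gate == "NOT":
            values[out] = 1 - args[0]
        else:
            raise ValueError(f"unknown gate: {gate}")
    return values


def majority(a, b, c):
    """Specification: output 1 when at least two inputs are 1."""
    return int(a + b + c >= 2)


def accuracy(netlist, spec, names=("a", "b", "c"), output="out"):
    """Fraction of truth-table rows on which the netlist agrees with the spec."""
    rows = list(product((0, 1), repeat=len(names)))
    hits = sum(
        simulate(netlist, dict(zip(names, row)))[output] == spec(*row)
        for row in rows
    )
    return hits / len(rows)


print(accuracy(MAJORITY_NETLIST, majority))  # 1.0
print(accuracy(BROKEN_NETLIST, majority))    # 0.5
```

Exhaustive checking like this only works for tiny circuits, since the table doubles with every extra input. That is part of why scaling is hard.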

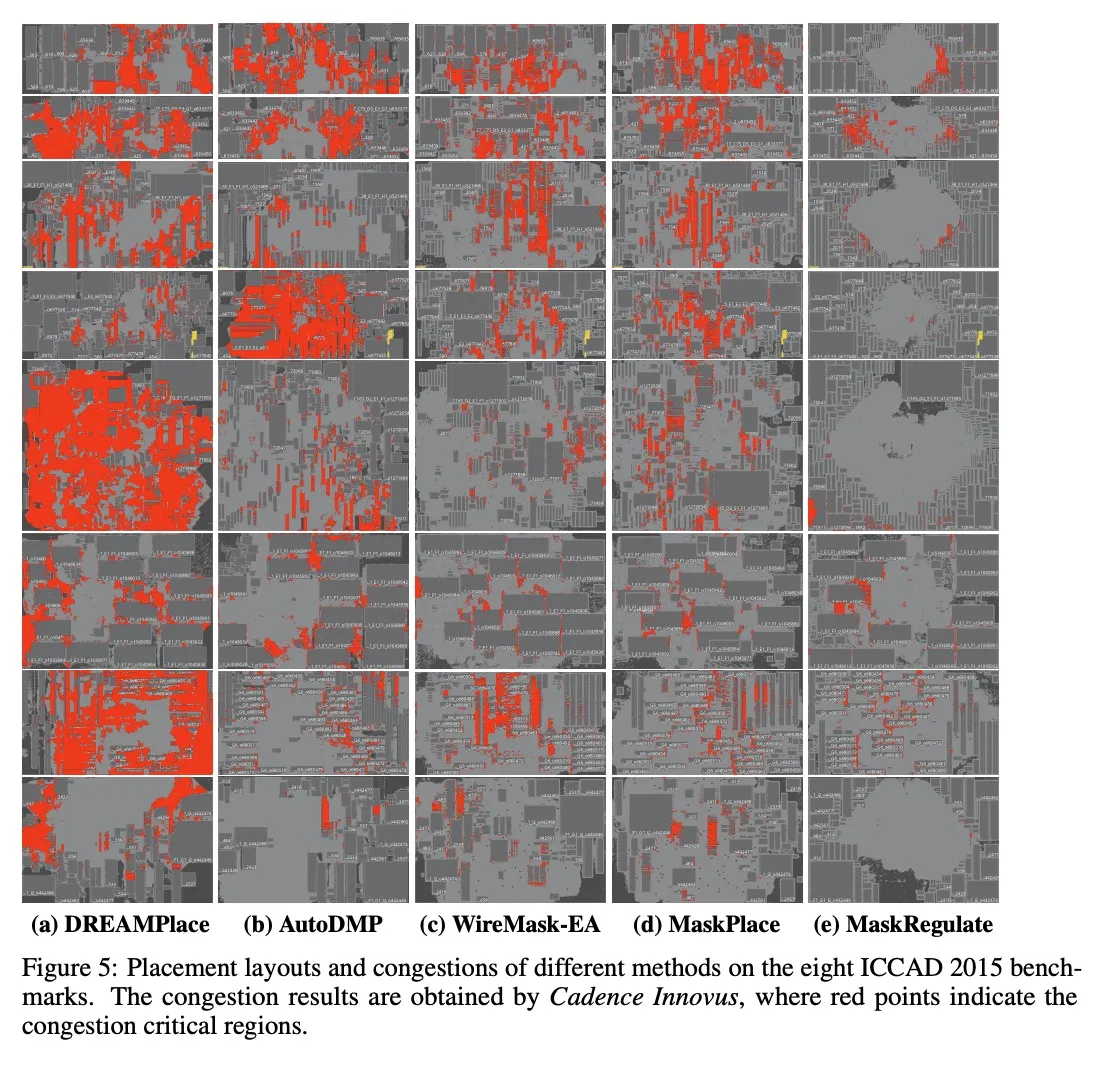

2. Reinforcement Learning Policy as Macro Regulator Rather than Macro Placer

After LS, you need to figure out where cells, memory, etc. physically go on the chip, in a step called “placement.”

Reinforcement learning (RL) is already commonly used here, but existing RL policies are typically trained to place a chip from scratch.

The authors propose training an RL policy to refine existing layouts (“Macro Regulator”) instead of generating new ones (“Macro Placer”). They show that the regulator approach results in placements with less congestion (shown in red).
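The “regulate, don’t place” idea can be sketched as a refinement loop that starts from an existing layout and only nudges macros. In this toy version a greedy rule stands in for the learned RL policy, and half-perimeter wirelength (HPWL) stands in for the paper’s congestion-aware objective. The grid size, nets, and function names are all assumptions for illustration.

```python
def hpwl(placement, nets):
    """Half-perimeter wirelength: sum of bounding-box half-perimeters over all nets."""
    total = 0
    for net in nets:
        xs = [placement[m][0] for m in net]
        ys = [placement[m][1] for m in net]
        total += (max(xs) - min(xs)) + (max(ys) - min(ys))
    return total


def refine(placement, nets, grid=8, max_passes=10):
    """Greedy stand-in for a learned regulator: nudge one macro at a time."""
    placement = dict(placement)  # never mutate the caller's layout
    for _ in range(max_passes):
        improved = False
        for macro in sorted(placement):
            x, y = placement[macro]
            best_cost, best_pos = hpwl(placement, nets), (x, y)
            occupied = {pos for m, pos in placement.items() if m != macro}
            for dx, dy in ((1, 0), (-1, 0), (0, 1), (0, -1)):
                cand = (x + dx, y + dy)
                in_bounds = 0 <= cand[0] < grid and 0 <= cand[1] < grid
                if not in_bounds or cand in occupied:
                    continue
                placement[macro] = cand
                cost = hpwl(placement, nets)
                if cost < best_cost:
                    best_cost, best_pos = cost, cand
            placement[macro] = best_pos  # keep the best one-step move, or stay put
            improved = improved or best_pos != (x, y)
        if not improved:
            break
    return placement


initial = {"A": (0, 0), "B": (7, 7), "C": (0, 7)}
nets = [("A", "B"), ("B", "C")]
refined = refine(initial, nets)
print(hpwl(initial, nets), "->", hpwl(refined, nets))
```

The point of the sketch is the starting condition: the loop begins from a full layout and makes small corrections, rather than placing each macro onto an empty canvas.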

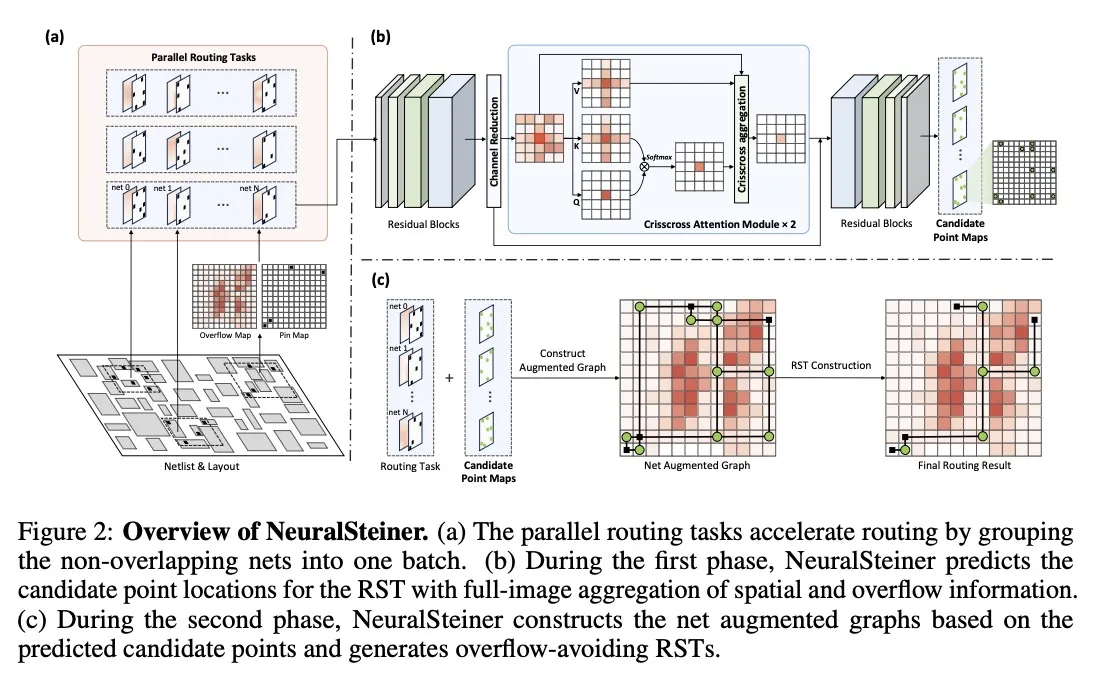

3. NeuralSteiner: Learning Steiner Tree for Overflow-avoiding Global Routing in Chip Design

After placement, you have to route the chip, i.e. connect the components with wires. Chip performance suffers if you jam too many wires through the same area, called an “overflow.”
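Overflow is easy to state in code. A minimal sketch, assuming each route is a list of adjacent grid cells and every boundary between two cells has the same wire capacity (both are simplifications I am making for illustration):

```python
from collections import Counter


def edge_usage(routes):
    """Count how many routes cross each boundary between neighbouring grid cells."""
    usage = Counter()
    for path in routes:
        for a, b in zip(path, path[1:]):
            usage[tuple(sorted((a, b)))] += 1  # direction does not matter
    return usage


def total_overflow(routes, capacity):
    """Sum of wires in excess of capacity over all grid edges."""
    return sum(max(0, used - capacity) for used in edge_usage(routes).values())


routes = [
    [(0, 0), (1, 0), (2, 0)],
    [(1, 0), (2, 0), (3, 0)],
    [(2, 0), (1, 0), (1, 1)],
]
print(total_overflow(routes, capacity=2))  # 1
```

All three routes cross the edge between cells (1, 0) and (2, 0), so with room for only two wires that edge overflows by one.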

The authors introduce NeuralSteiner, a learning-based approach to global routing that avoids overflows.

Rather than directly generating complete routes, they first use a neural network to predict where good connection points (Steiner points) are likely to be, use an attention mechanism to take the full chip layout into account, and then connect the points with a greedy graph algorithm.
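Why bother predicting connection points at all? Because a well-chosen extra point shortens the wiring. A minimal sketch, where a hand-picked Steiner point stands in for the network’s prediction and a plain Prim-style greedy tree stands in for the paper’s overflow-aware construction (both are my substitutions):

```python
def manhattan(p, q):
    """Wire length between two grid points when wires run horizontally or vertically."""
    return abs(p[0] - q[0]) + abs(p[1] - q[1])


def greedy_tree(points):
    """Prim-style greedy: repeatedly attach the unconnected point closest to the tree."""
    points = list(points)
    in_tree, remaining, edges = [points[0]], points[1:], []
    while remaining:
        u, v = min(
            ((u, v) for u in in_tree for v in remaining),
            key=lambda edge: manhattan(*edge),
        )
        edges.append((u, v))
        in_tree.append(v)
        remaining.remove(v)
    return edges


def wirelength(edges):
    return sum(manhattan(u, v) for u, v in edges)


pins = [(0, 0), (2, 0), (1, 2)]
steiner_point = (1, 0)  # hand-picked here; NeuralSteiner predicts such points

print(wirelength(greedy_tree(pins)))                    # 5
print(wirelength(greedy_tree(pins + [steiner_point])))  # 4
```

Connecting the three pins directly costs 5 units of wire. Routing them through the extra point costs 4.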

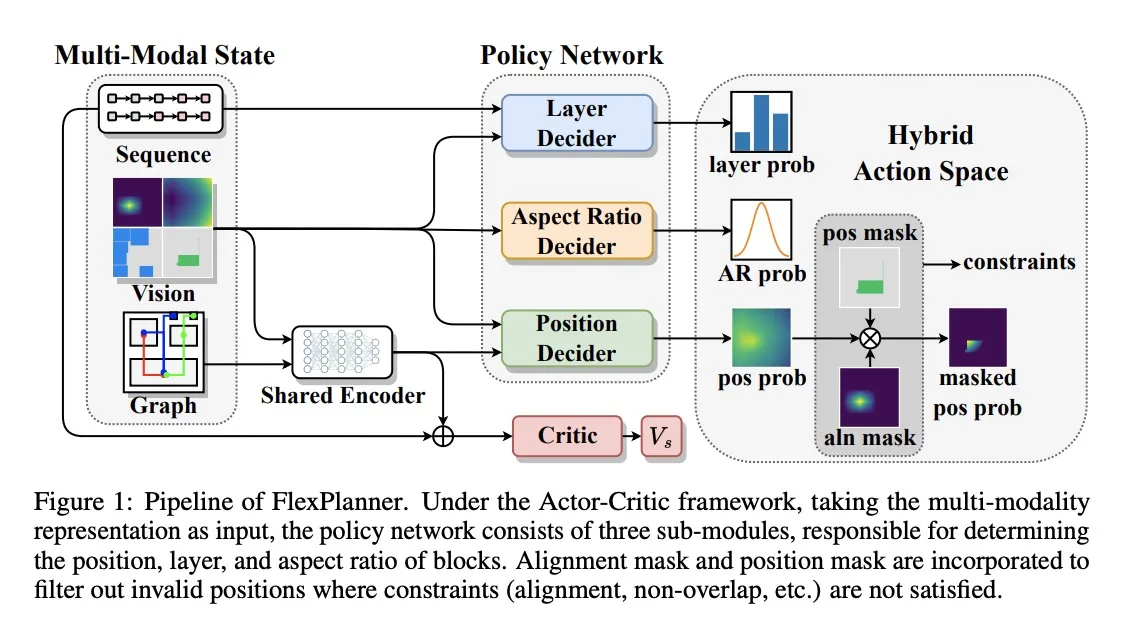

4. FlexPlanner: Flexible 3D Floorplanning via Deep Reinforcement Learning in Hybrid Action Space with Multi-Modality Representation

Guess what? Chips are growing vertically! “3D ICs” pack more transistors in the same area by stacking layers on top of each other. This makes floorplanning (coming up with the blueprint for physical design) far trickier.

Traditional heuristic-based approaches (think “tricks” that designers over the years have discovered) are not flexible enough to accommodate floorplanning for 3D ICs.

FlexPlanner tackles this with deep reinforcement learning. It’s also multimodal, so it sees the floorplan in multiple ways (visual, graph, sequence of blocks), like a human designer would.

5. Synthetic Programming Elicitation for Text-to-Code in Very Low-Resource Programming and Formal Languages

LLMs have gotten quite good at writing code in popular programming languages like Python, but they struggle with more obscure languages.

This is bad news for chip design because most of the code is written in languages that are scarcely represented in training data (e.g. SystemVerilog).

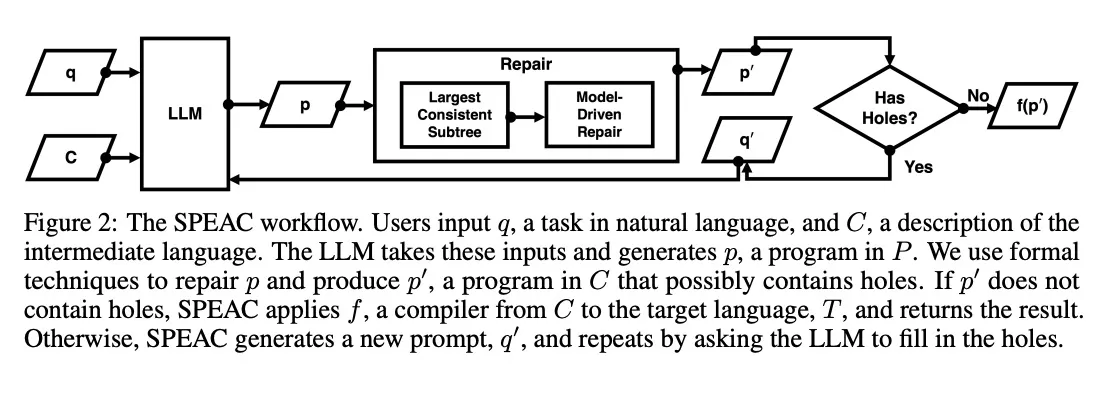

The authors came up with a clever workaround: let the LLM write in a familiar language first, convert that code to the target language, then get the LLM to fix any gaps or bugs.
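As a rough sketch, the workflow looks like the loop below. Every function passed in (`write_familiar`, `translate`, `check`, `repair`) is a hypothetical placeholder for an LLM call or a tool, and the fake stubs exist only so the example runs. This follows the three steps as described above, not the paper’s actual implementation.

```python
from typing import Callable, List, Optional


def elicit_and_translate(
    task: str,
    write_familiar: Callable[[str], str],     # LLM drafts in a language it knows well
    translate: Callable[[str], str],          # converts the draft to the target language
    check: Callable[[str], List[str]],        # returns a list of problems, empty if clean
    repair: Callable[[str, List[str]], str],  # LLM patches the reported problems
    max_rounds: int = 3,
) -> Optional[str]:
    """Draft, translate, then repair until the checker is satisfied or rounds run out."""
    target_code = translate(write_familiar(task))
    for _ in range(max_rounds):
        errors = check(target_code)
        if not errors:
            return target_code
        target_code = repair(target_code, errors)
    return target_code if not check(target_code) else None


# Fake stand-ins so the sketch is runnable; real versions would call a model or a compiler.
def fake_write(task):
    return "def add(a, b): return a + b"


def fake_translate(code):
    return "module add; TODO endmodule"  # translation leaves a hole to fill


def fake_check(code):
    return ["unfilled hole"] if "TODO" in code else []


def fake_repair(code, errors):
    return code.replace("TODO", "assign y = a + b;")


print(elicit_and_translate("add two numbers", fake_write, fake_translate, fake_check, fake_repair))
```

Returning `None` when the repair budget runs out keeps unverified output from slipping through, which matters a lot more for hardware than for a throwaway script.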

Could this work for writing HDL code, scripts for legacy tool chains, etc.? TBD!

Conclusion

Overall, these papers + related efforts (like Google’s AlphaChip) show that AI can already do big chunks of the “backend” of chip design (floorplanning, placement, routing). There are also promising threads to pull on the synthesis and codegen front.

I would’ve loved to see more research into chip verification, SW/HW co-design, and how AI can enhance the developer experience (not just faster or cheaper, but ways to induce more flow state & creativity for human engineers).

What did I miss? Over 4,000 papers were presented at NeurIPS 2024, so I obviously didn’t have time to go through everything. Reach out if you have any questions or want to chat.

What a great week for chips!

[1] To be exact, Jeff raised this question at the “AI for EDA Workshop” that was running parallel to NeurIPS, in a talk titled “A Sketch of How to Move Towards an End-to-End AI-Automated Chip Design Process.”